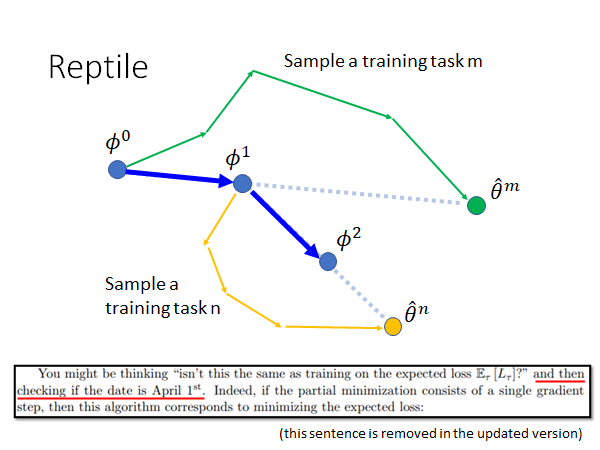

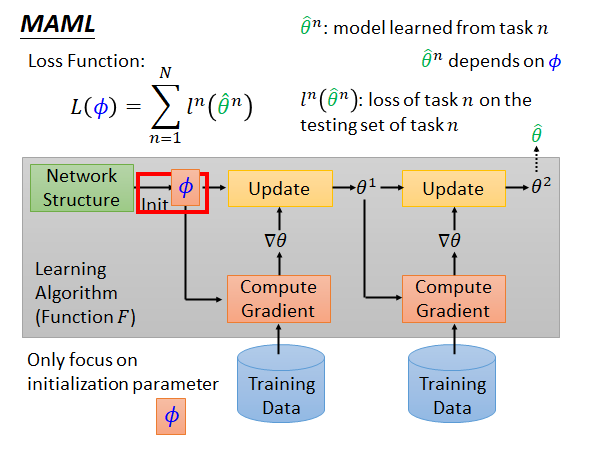

Figure 2 showcases how the proposed variant improves The improved MAML variant is called MAML++. In this blog-post, we’ll go over the MAML model, identify key problems, and then formulate a number of methodologies that attempt to solve them, as proposed in the recent paper How to train your MAML and implemented in Some of its problems and proposing solutions that stabilize the training, increase the convergence speed and improve the generalization performance. So, in order to improve gradient-based, end-to-end differentiable meta-learning models in general, we focus on MAML (which is relatively simple), identifying Then anything more complicated than that will suffer from such issues as well. However, even such a relatively parameter-light model can have instability problems depending on architecture and hyper-parameter choices. In MAML, we have a system that can achieve very strong results, with a relatively simple learning scheme composed of learning a parameter initialization for quick adaptation.

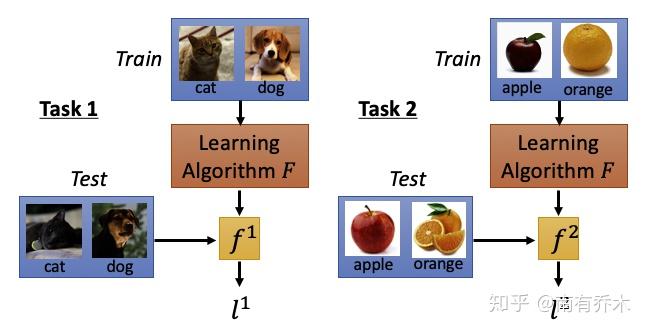

The architecture of the parameterized component. It is perhaps reasonable to assume that one of the deciding factors that make or break such systems is One possible reason for this might be that the Meta-Learner LSTM’s architecture affected its modelling capacity, thus rendering it inferior to a manually set optimizer. One would expect the learnable optimizer to outperform the manually built one. Methods from this family of meta-learning are currently in their infancy, often suffering from a variety of issues.įor example, MAML’s inner loop SGD optimizerĬan outperform the Meta-learner LSTM method, which has parameterized its update rule as an LSTM that receives gradients and predicts updates. Gradient-based, end-to-end differentiable meta-learning presents an incredible opportunity for efficient and effective meta-learning. However, gradient-based, end-to-end differentiable supervised meta-learning schemes, such as Meta-learner LSTM and Model Agnostic Meta-Learning (MAML), can be run on a single GPU, within 12-24 hours. Very computationally expensive, often requiring hundreds of GPU hours for a single experiment. Both reinforcement learning and genetic algorithms have been demonstrated to be Supervised learning, reinforcement learning and genetic algorithms. The most effective, as of the time of writing this, are Meta-learning can be achieved through a variety of learning paradigms. The target metric is the target set’s cross-entropy error. The constraints in this instance are that the model will only have access to very few data-points from each class, and a support set),Ĭontaining only 1-5 samples from each class, such that it can generalize strongly on a corresponding small validation set (i.e. Using meta-learning, one can formulate and train systems that can very quickly learn from a small training set (i.e. 
Process can meta-learn the parameters of such components, thus enabling automatic learning of inner-loop components.įew-shot learning is a perfect example of a problem-area where meta-learning can be used to effectively meta-learn a solution. If the models in the inner-most levels make use of components with learnable parameters, the outer-most optimization learning to transfer between tasks more efficiently). fine-tuning a model on a new dataset), whereas the outer-most level acquires across-task knowledge (e.g.

The inner-most levels acquire task-specific knowledge (e.g. Meta-learning or as often referred to learning to learn is achieved by abstracting learning into two or more levels. optimizers, loss functions, initializations, learning rates, updated functions, architectures etc.) throughĮxperience over a large number of tasks. Own ability to learn by learning some or all of their own building blocks PrefaceĮnter meta-learning, a universe where computational models, composed by multiple levels of learning abstractions, can improve their Antreas Antoniou, Harrison Edwards, Amos Storkey (2018) How to train your MAML.
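The learning scheme described above has two parts. An inner loop adapts to a task's support set. An outer loop meta-learns a shared parameter initialization from the target-set cross-entropy. The sketch below is a minimal, hypothetical illustration of that scheme, not the paper's implementation. It assumes the following toy setup:

- a 2-way, K-shot problem in which each task labels a 1-D input as class 1 when it exceeds a task-specific threshold;
- a logistic-regression model with parameters (w, b);
- a single inner SGD step with rate alpha;
- plain SGD in the outer loop with rate beta.

All function names and hyper-parameter values are illustrative. Because the model is tiny, the exact second-order MAML meta-gradient, (I - alpha * H_support) times the target-set gradient at the adapted parameters, can be written out by hand.

```python
import math
import random


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def softplus(z):
    """Numerically stable log(1 + exp(z))."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def sample_task(rng):
    """Hypothetical toy task: inputs above a threshold c belong to class 1."""
    return rng.uniform(-2.0, 2.0)


def sample_split(rng, c, k):
    """Draw k labelled examples per class (a 2-way, k-shot set) for threshold c."""
    pos = [(c + rng.uniform(0.1, 2.0), 1.0) for _ in range(k)]
    neg = [(c - rng.uniform(0.1, 2.0), 0.0) for _ in range(k)]
    return pos + neg


def loss_grad_hess(theta, data):
    """Mean binary cross-entropy of p = sigmoid(w*x + b), with its gradient and Hessian."""
    w, b = theta
    n = len(data)
    loss = gw = gb = hww = hwb = hbb = 0.0
    for x, y in data:
        z = w * x + b
        p = sigmoid(z)
        loss += softplus(z) - y * z  # equals -y*log(p) - (1-y)*log(1-p)
        gw += (p - y) * x
        gb += p - y
        s = p * (1.0 - p)
        hww += s * x * x
        hwb += s * x
        hbb += s
    return loss / n, (gw / n, gb / n), ((hww / n, hwb / n), (hwb / n, hbb / n))


def maml_meta_gradient(theta, support, target, alpha):
    """Target loss after one inner SGD step, and its exact gradient w.r.t. theta."""
    _, g_s, h_s = loss_grad_hess(theta, support)
    # Inner loop: adapt the shared initialization to this task's support set.
    adapted = (theta[0] - alpha * g_s[0], theta[1] - alpha * g_s[1])
    target_loss, g_t, _ = loss_grad_hess(adapted, target)
    # Chain rule through the inner step: (I - alpha * H_support) @ grad_target(adapted).
    meta_g = (
        g_t[0] - alpha * (h_s[0][0] * g_t[0] + h_s[0][1] * g_t[1]),
        g_t[1] - alpha * (h_s[1][0] * g_t[0] + h_s[1][1] * g_t[1]),
    )
    return target_loss, meta_g


def train_maml(steps=500, meta_batch=8, k=5, alpha=0.5, beta=0.1, seed=0):
    """Outer loop: meta-learn the initialization theta over batches of tasks."""
    rng = random.Random(seed)
    theta = (0.0, 0.0)
    for _ in range(steps):
        acc_w = acc_b = 0.0
        for _ in range(meta_batch):
            c = sample_task(rng)
            support, target = sample_split(rng, c, k), sample_split(rng, c, k)
            _, g = maml_meta_gradient(theta, support, target, alpha)
            acc_w += g[0] / meta_batch
            acc_b += g[1] / meta_batch
        theta = (theta[0] - beta * acc_w, theta[1] - beta * acc_b)
    return theta


def evaluate(theta, alpha, tasks=200, k=5, seed=1):
    """Mean target-set cross-entropy after one inner step, over unseen tasks."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(tasks):
        c = sample_task(rng)
        support, target = sample_split(rng, c, k), sample_split(rng, c, k)
        total += maml_meta_gradient(theta, support, target, alpha)[0]
    return total / tasks
```

Calling `train_maml()` returns a meta-learned initialization. `evaluate(theta, 0.5)` then reports the mean target-set cross-entropy after one adaptation step on unseen toy tasks, which can be compared against `evaluate((0.0, 0.0), 0.5)`. Real MAML implementations compute the same second-order term with automatic differentiation instead of a hand-derived Hessian.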
